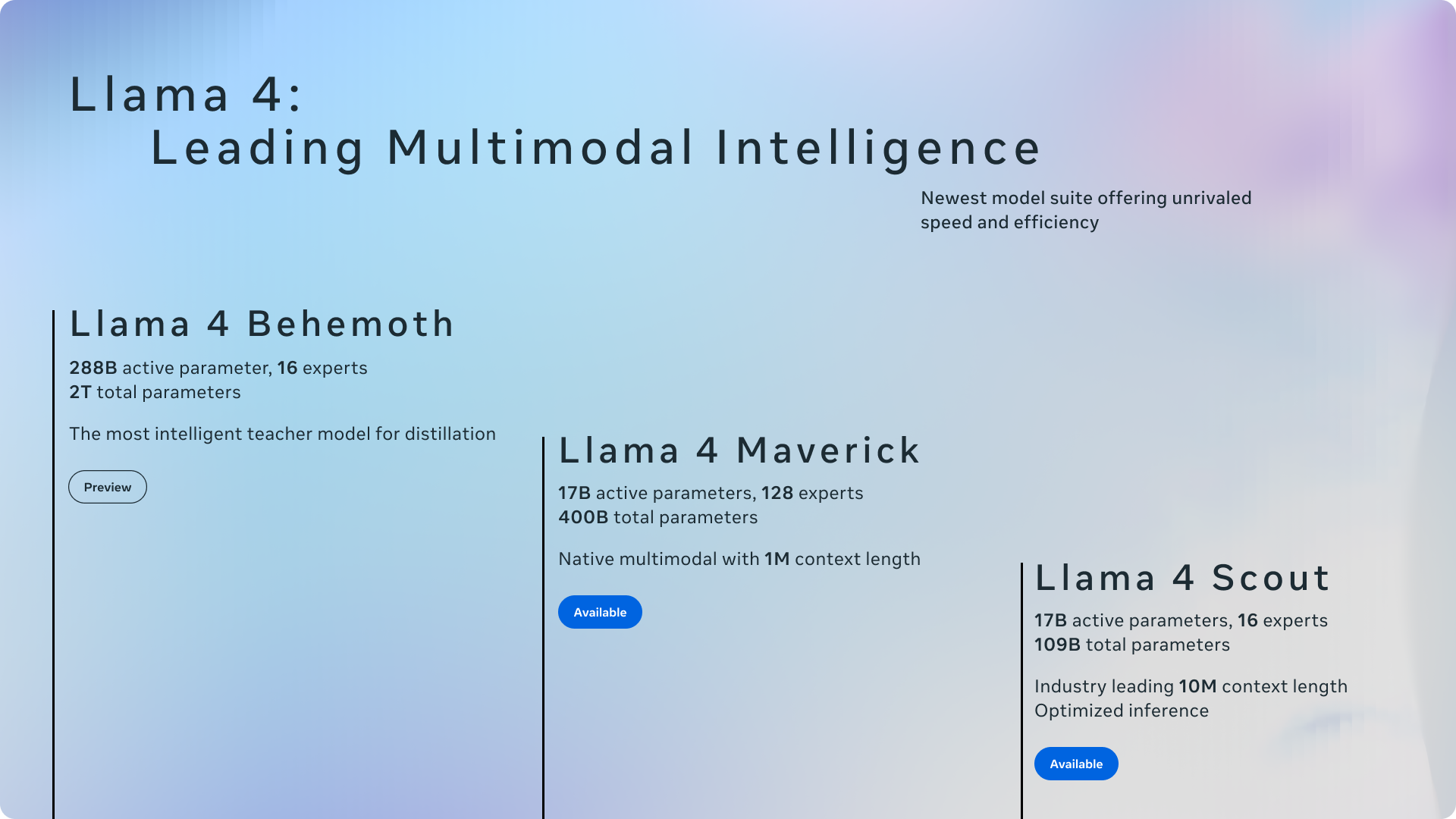

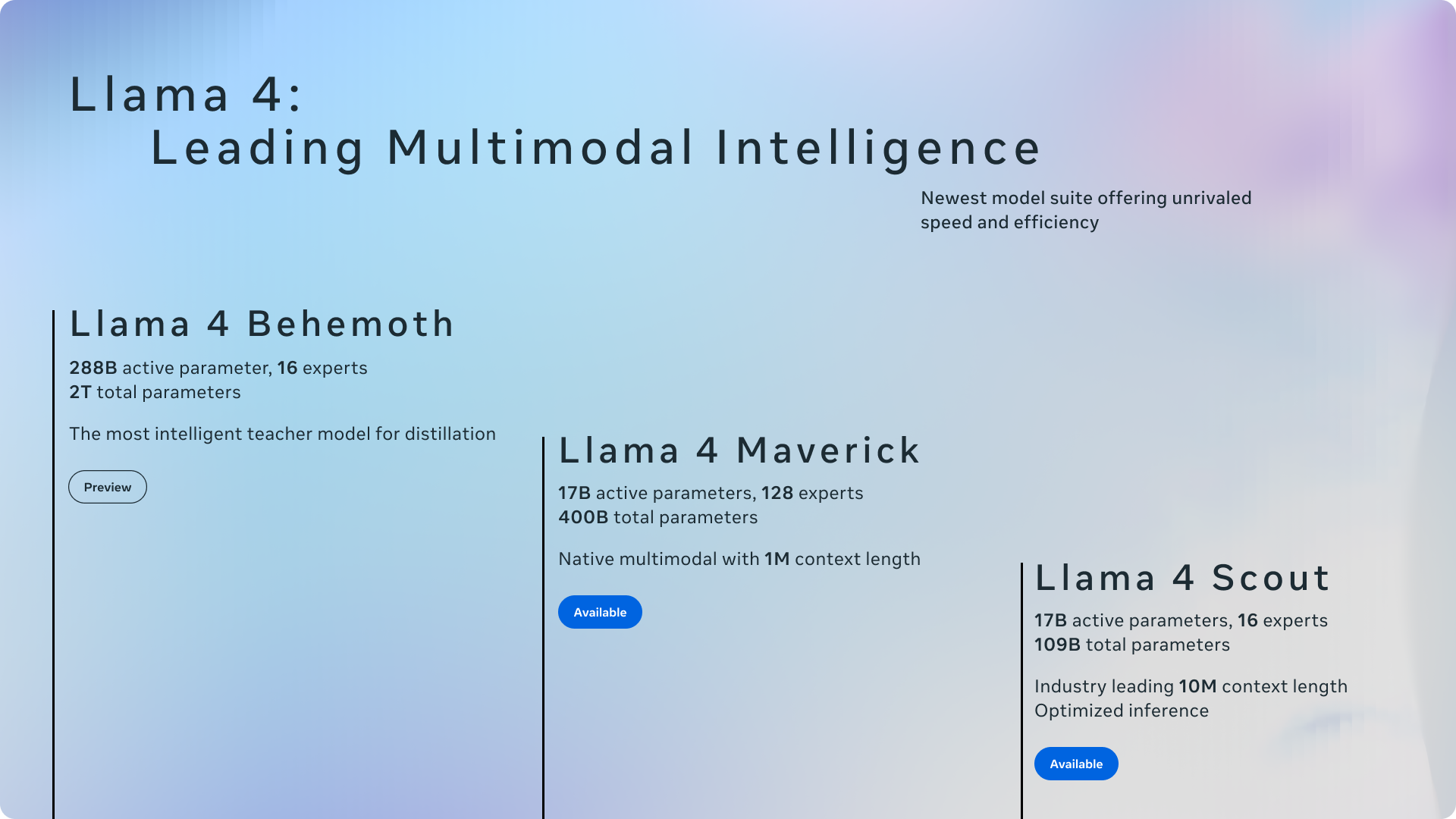

Meta introduces Llama 4 Scout and Llama 4 Maverick, the first open-weight natively multimodal models with unprecedented context-length support, and Meta's first models built on a mixture-of-experts (MoE) architecture.

ai.meta
